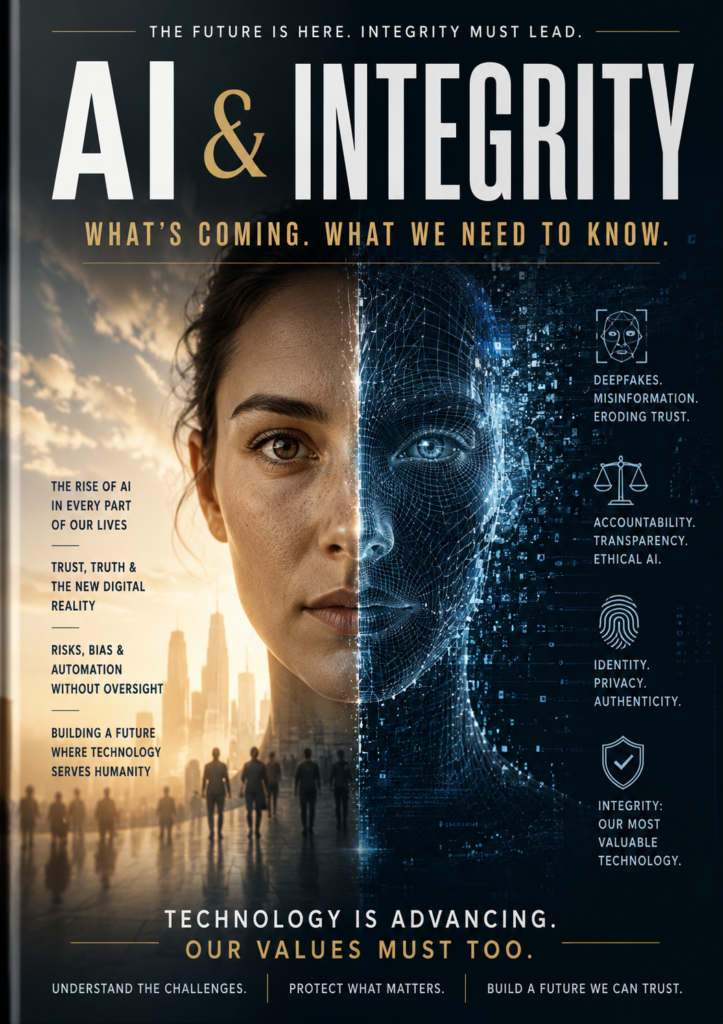

Artificial intelligence is moving faster than most people expected. The question is no longer whether AI will change the world; it already has. The real question now is whether integrity can keep up with the technology.

AI is beginning to shape hiring decisions, medical advice, education, investing, advertising, customer service, political messaging, and even personal relationships. At the same time, the line between what is real and what is artificial is becoming harder to recognize. Deepfakes, synthetic voices, AI-written content, and automated persuasion systems are no longer future concepts — they are active parts of daily life. (Axios)

That creates a new challenge for society: trust.

For decades, people relied on visual proof, recognizable voices, documents, and professional authority as signals of credibility. AI is now capable of imitating nearly all of them. A fake video can look real. A cloned voice can sound authentic. An AI-generated article can appear professionally written even when parts of it are inaccurate or misleading. Experts are warning that AI-generated misinformation and impersonation are beginning to erode digital trust worldwide. (AIhub)

Integrity may become one of the most valuable assets in the AI era.

The companies, creators, schools, media platforms, and governments that survive in the long term may not simply be the ones with the most powerful AI systems. They may be the ones people still trust. Transparency, accountability, and verification are rapidly becoming competitive advantages rather than optional ethics policies.

One growing concern is “automation bias”: the tendency for people to trust AI outputs even when those outputs are wrong. Researchers and business analysts are warning that organizations may begin relying too heavily on AI-generated decisions, reports, recommendations, or approvals without proper human oversight. (www.hoganlovells.com)

This raises difficult questions:

- If AI helps write an article, who is responsible for errors?

- If AI screens job candidates, who is accountable for bias?

- If AI creates fake evidence, how do courts verify truth?

- If AI persuades people emotionally, where is the ethical line?

- If AI can imitate anyone, what happens to identity itself?

These are no longer hypothetical discussions. Governments worldwide are already moving toward stricter AI regulation, especially around deepfakes, misinformation, privacy, and synthetic media. The European Union, for example, is expanding rules targeting harmful AI-generated content and requiring stronger disclosure standards. (El País)

But regulation alone may not solve the problem.

Technology has historically moved faster than law. By the time rules are written, the systems they target have often already advanced. That means integrity may increasingly depend on culture, leadership, and individual responsibility as much as on legal enforcement.

Businesses will likely need stronger verification systems.

Schools may need to teach “AI literacy” alongside reading and math.

Employers may start valuing critical thinking more than memorization.

Media companies may need authentication systems for content.

Consumers may need to question everything they see online.

The future may belong to people who know how to work with AI without surrendering human judgment to it.

There is also another side to this conversation that often gets overlooked.

AI itself is not automatically unethical. In many ways, it could improve fairness, accessibility, efficiency, and education when used responsibly. AI can assist doctors, help small businesses compete, support disabled individuals, speed up research, and give people access to information that was once difficult to obtain. The danger is not necessarily the technology itself — it is what happens when speed, profit, influence, or convenience outrun accountability.

Some experts now argue that “trust infrastructure” may become as important as technological infrastructure in the AI economy. (Innovaccer)

In other words, the future of AI may not be decided solely by who builds the smartest systems.

It may be decided by who protects truth, credibility, and human integrity while using them.

The next few years could determine whether AI becomes a tool that strengthens society or one that weakens confidence in nearly everything people see, hear, and believe. The technology is advancing either way.

Now the real test begins:

Can integrity evolve as fast as artificial intelligence?

The Grey Ghost